In today’s post, let’s talk about the “Administration” => “Queue” section.

The Zabbix queue, also called delayed metrics, represents data that is currently missing in the monitoring tool: items whose values are overdue.

In this post we will:

1) Remind ourselves of basic prerequisites across monitoring components

2) Look at how the queue section works

3) Go through an algorithm to make things better

4) Troubleshoot agent hosts that support active checks only

Basic prerequisites

For a perfect setup, we need to ensure that:

The time on the Zabbix server and the Zabbix proxy is correct.

```
$ timedatectl
Local time: Thu 2021-03-18 19:38:14 EET
Universal time: Thu 2021-03-18 17:38:14 UTC
RTC time: Thu 2021-03-18 17:38:14
Time zone: Europe/Riga (EET, +0200)
System clock synchronized: no
NTP service: inactive
RTC in local TZ: no
```

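Time zones can differ across components, but universal time (UTC) must be the same. Note that the sample output above shows an unsynchronized clock with an inactive NTP service; on a healthy setup, an NTP service keeps “System clock synchronized” at “yes” on every component.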
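A minimal sketch for scripting this check on each component by parsing the human-readable `timedatectl` output shown above. The function name `clock_synced` is hypothetical, and the exact label is an assumption: newer systemd prints “System clock synchronized”, while older releases print “NTP synchronized”.

```bash
# Hypothetical helper: reads timedatectl output on stdin and succeeds (exit 0)
# only if the synchronization line says "yes".
# Assumption: the label is "System clock synchronized:" (newer systemd) or
# "NTP synchronized:" (older systemd); adjust the pattern for your version.
clock_synced() {
  grep -Eq '^[[:space:]]*(System clock|NTP) synchronized:[[:space:]]*yes[[:space:]]*$'
}

# Usage on a live machine (run it on the server and on every proxy):
#   if timedatectl | clock_synced; then echo "clock OK"; else echo "clock NOT synchronized"; fi
```

Running it against the sample output above would report the clock as not synchronized.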

If passive checks are available for Zabbix agents, the monitoring software can already detect whether the agent machine's clock is correct. It does this by comparing the local time of the remote machine with the timestamp of the check execution. This trigger expression fires when the agent machine's time has drifted by more than 3 minutes:

```
{Linux by Zabbix agent:system.localtime.fuzzytime(3m)}=0
```
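The arithmetic behind the expression is simple: 3m is 180 seconds, and fuzzytime returns 1 while the agent's reported time stays within that distance of the server's clock, 0 otherwise. Below is a minimal sketch of that comparison; `fuzzytime_ok` is a hypothetical name, and treating the exact boundary (180 s) as “within” is an assumption, so check the trigger function documentation for your Zabbix version.

```bash
# Hypothetical emulation of fuzzytime(T): compare the agent's local time
# (value of system.localtime, Unix seconds) with the server's clock.
fuzzytime_ok() {
  local agent_ts=$1 server_ts=$2 threshold=$3
  local diff=$(( agent_ts - server_ts ))
  # Use the absolute difference: drift in either direction counts.
  if (( diff < 0 )); then diff=$(( -diff )); fi
  # Print 1 when the clocks agree within the threshold, 0 otherwise.
  if (( diff <= threshold )); then echo 1; else echo 0; fi
}

# 3m = 180 seconds; the trigger above fires when the result is 0.
fuzzytime_ok 1616089094 1616089200 180   # prints 1 (106 s apart)
fuzzytime_ok 1616089094 1616089400 180   # prints 0 (306 s apart)
```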

If an agent machine supports only active checks, see the post “Detect if time is off by active Zabbix agent” for a solution.

How the queue section works

The queue section is closely tied to server uptime, so first check how long the server has been running:

```
# systemctl status zabbix-server
● zabbix-server.service - Zabbix Server
Loaded: loaded (/usr/lib/systemd/system/zabbix-server.service; enabled; vendor preset: disabled)
Drop-In: /etc/systemd/system/zabbix-server.service.d
└─override.conf
Active: active (running) since Mon 2021-03-01 11:10:35 EET; 3 weeks 0 days ago
Main PID: 28655 (zabbix_server)
```

If the master server gets restarted, the queue may look short, creating the illusion that everything is OK. A restart does not solve whatever is causing the queue.
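Because of this, it helps to check uptime before trusting a short queue. Below is a minimal sketch, assuming you pass in the service start time as Unix seconds; `check_server_uptime` and `MIN_UPTIME` are hypothetical names, and the one-day threshold is an arbitrary example value, not a Zabbix setting.

```bash
# Hypothetical threshold: how long the server should run before the queue
# is considered representative (1 day here, purely an example value).
MIN_UPTIME=${MIN_UPTIME:-86400}

# Print a warning if the server started less than MIN_UPTIME seconds ago.
check_server_uptime() {
  local start_ts=$1 now_ts=$2   # both in Unix seconds
  local up=$(( now_ts - start_ts ))
  if (( up < MIN_UPTIME )); then
    echo "WARN: up ${up}s; queue may not reflect reality yet"
  else
    echo "OK: up $(( up / 86400 ))d"
  fi
}

# Usage on the server (assumes GNU date can parse the systemd timestamp):
#   start=$(date -d "$(systemctl show -p ActiveEnterTimestamp --value zabbix-server)" +%s)
#   check_server_uptime "$start" "$(date +%s)"
```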

Algorithm to make things better

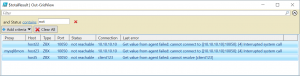

If the queue contains a large number of metrics, most likely some hosts are not reachable at all. I recommend fixing all red (unavailable) hosts first.

It’s very easy to use Windows PowerShell to summarize devices that are not reachable, and it also shows the exact error message.

This works well mainly because PowerShell can filter the output on the fly. All we need to do is select the entries whose “Status” is “not reachable” and look at the error message.
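The PowerShell commands themselves are not reproduced in this post. As a rough shell counterpart of the same idea (filter by status, then group by error message), here is a sketch that assumes you already have a host list exported as TSV with the columns host, status and error. That export format and the function name `summarize_unreachable` are hypothetical; this is not the author's PowerShell approach.

```bash
# Hypothetical input: TSV lines "host<TAB>status<TAB>error message".
# Keep only "not reachable" hosts and count them per error message,
# most frequent error first (ties ordered by message text).
summarize_unreachable() {
  awk -F'\t' '$2 == "not reachable" { n[$3]++ }
       END { for (e in n) printf "%d\t%s\n", n[e], e }' |
    sort -t $'\t' -k1,1nr -k2,2
}

# Usage: summarize_unreachable < hosts.tsv
```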

List all missing items

This is useful when the queue is big and some hosts use active checks only.

Step 1: Use the Zabbix API to obtain a session key:

```bash
curl http://127.0.0.1/api_jsonrpc.php -s -X POST -H 'Content-Type: application/json' -d \
'{"jsonrpc":"2.0","method":"user.login","params":{"username":"Admin","password":"zabbix"},"id":1}' | \
grep -E -o "([0-9a-f]{32,32})"
```

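Adjust the URL to match your frontend. For example, if you access dashboards via ‘http://192.168.88.20/zabbix.php?action=dashboard.view&dashboardid=18’, then the path for the Zabbix API is ‘http://192.168.88.20/api_jsonrpc.php’. On Zabbix versions older than 5.4, the ‘user.login’ parameter is called ‘user’ instead of ‘username’. The command prints a session key that must be used in the upcoming steps:

```
2fbf06f496529c68bce2c94f94a0531a
```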
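The two manual steps above (deriving the API URL from a dashboard URL and extracting the key) can be wrapped in small helpers. This is a sketch: the function names are hypothetical, and `extract_token` assumes the compact JSON returned by ‘user.login’, with the key in the "result" field.

```bash
# Hypothetical helper: replace everything from "zabbix.php" onward with
# "api_jsonrpc.php", which also works when the frontend lives under /zabbix/.
api_url_from_dashboard() {
  local url=$1
  echo "${url%%zabbix.php*}api_jsonrpc.php"
}

# Hypothetical helper: print the 32-hex session key from the "result" field
# of a user.login response read on stdin (stricter than matching any hex run).
extract_token() {
  sed -n 's/.*"result":[[:space:]]*"\([0-9a-f]\{32\}\)".*/\1/p'
}

# Usage (credentials are the defaults used above):
#   api=$(api_url_from_dashboard 'http://192.168.88.20/zabbix.php?action=dashboard.view&dashboardid=18')
#   curl -s -X POST -H 'Content-Type: application/json' \
#     -d '{"jsonrpc":"2.0","method":"user.login","params":{"username":"Admin","password":"zabbix"},"id":1}' \
#     "$api" | extract_token
```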

Step 2: Check that we can get item IDs using this session key:

```bash
zabbix_get -s 127.0.0.1 -p 10051 -P plaintext -k '{"request":"queue.get","sid":"2fbf06f496529c68bce2c94f94a0531a","type":"details","limit":"999999"}'
```

Here ‘127.0.0.1’ is the address of the machine where the ‘zabbix-server’ service is running, and 10051 is the Zabbix server port. The output will be similar to:

```
{"response":"success","data":[
{"itemid":322185,"nextcheck":1614589895},
{"itemid":322191,"nextcheck":1614589895},
{"itemid":322188,"nextcheck":1614589895},
{"itemid":322187,"nextcheck":1614590435}
],"total":4}
```
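Before mapping item IDs to hosts, the nextcheck timestamps already show how late each item is. Below is a minimal sketch, assuming the compact JSON shape shown above (each "itemid" directly followed by "nextcheck"); `overdue_report` is a hypothetical helper name, and it treats the time elapsed since nextcheck as the delay.

```bash
# Hypothetical helper: read queue.get "details" JSON on stdin and print
# "itemid<TAB>seconds overdue", largest delay first.
overdue_report() {
  local now=$1   # current Unix time, e.g. "$(date +%s)"
  grep -oE '"itemid":[0-9]+,"nextcheck":[0-9]+' |
    # Splitting on ":" and "," gives: "itemid" / id / "nextcheck" / timestamp.
    awk -F'[:,]' -v now="$now" '{ printf "%s\t%d\n", $2, now - $4 }' |
    sort -t $'\t' -k2,2nr -k1,1n
}

# Usage: overdue_report "$(date +%s)" < /tmp/queue.json
```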

If the previous step was successful, save the output to a file:

```bash
zabbix_get -s 127.0.0.1 -p 10051 -P plaintext -k '{"request":"queue.get","sid":"2fbf06f496529c68bce2c94f94a0531a","type":"details","limit":"999999"}' > /tmp/queue.json
```

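Optional: create a configuration file that allows passwordless access to the database with the command-line client.

Example for MySQL:

```
# cat ~/.my.cnf
[client]
host=127.0.0.1
user=zabbix
password=zabbix
```

Example for PostgreSQL (format: hostname:port:database:username:password):

```
# cat ~/.pgpass
127.0.0.1:5432:*:zabbix:zabbix
```

Restrict both files with ‘chmod 600’; the PostgreSQL client ignores ~/.pgpass if group or others can read it.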

Now we can export the data to a file. A CSV file would be the obvious choice, but item keys themselves can contain commas, so CSV is not a good fit here. Instead, let’s create a tab-separated values (TSV) file.
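To see the problem concretely, compare how the same row splits with each separator. The proxy and host names are made-up sample values; the item key ‘vfs.fs.size[/,pfree]’ is a standard Zabbix agent key that contains a comma. Proper CSV quoting would also solve this, but TSV avoids the need for it.

```bash
# Made-up sample row: proxy, host, item key (the key contains a comma).
row_csv='proxy1,web01,vfs.fs.size[/,pfree]'
row_tsv=$'proxy1\tweb01\tvfs.fs.size[/,pfree]'

# Count fields as a naive comma/tab splitter would see them.
echo "$row_csv" | awk -F',' '{ print NF }'    # prints 4: the key was split
echo "$row_tsv" | awk -F'\t' '{ print NF }'   # prints 3: proxy, host, key
```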

On MySQL:

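```bash
# Extract item IDs, join them into a comma-separated list, and look them up.
grep -oP 'itemid":\K\d+' /tmp/queue.json | tr '\n' ',' | sed 's|,$||' | xargs -I{} echo "
SELECT p.host AS proxy, hosts.host, items.key_
FROM hosts
JOIN items ON (hosts.hostid = items.hostid)
LEFT JOIN hosts p ON (hosts.proxy_hostid = p.hostid)
WHERE items.itemid IN ({});" | mysql -sN --batch nameOfZabbixDB > /tmp/queue.tsv
```

The LEFT JOIN keeps items of hosts that are monitored directly by the server; for them the proxy column is NULL. An additional inner join on the proxy would silently drop those hosts.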

On PostgreSQL, we first produce output with the string “TabSep” as the field separator, then convert this string into a real tab character:

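```bash
# Same lookup as for MySQL; psql output uses "TabSep", which sed turns into tabs.
grep -oP 'itemid":\K\d+' /tmp/queue.json | tr '\n' ',' | sed 's|,$||' | xargs -I{} echo "
SELECT p.host AS proxy, hosts.host, items.key_
FROM hosts
JOIN items ON (hosts.hostid = items.hostid)
LEFT JOIN hosts p ON (hosts.proxy_hostid = p.hostid)
WHERE items.itemid IN ({});" | psql -h 127.0.0.1 -U zabbix -t -A -F"TabSep" nameOfZabbixDB | sed "s%TabSep%\t%g" > /tmp/queue.tsv
```

The ‘-h 127.0.0.1 -U zabbix’ options make psql connect over TCP as the zabbix user, so the ~/.pgpass entry for 127.0.0.1 applies. As with MySQL, the LEFT JOIN keeps hosts monitored directly by the server (empty proxy column).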

The TSV output can be imported into any spreadsheet software for further analysis.
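Before opening the file in a spreadsheet, a quick count of missing items per host often points straight at the problem hosts. This is a sketch with a hypothetical function name, assuming the three columns produced by the queries above (proxy, host, item key). MySQL batch mode prints NULL for hosts without a proxy, while psql prints an empty field, so both are shown as "(server)".

```bash
# Hypothetical helper: summarize queue.tsv as
# "missing items<TAB>proxy<TAB>host", worst host first.
summarize_queue() {
  awk -F'\t' '{ p = ($1 == "" || $1 == "NULL") ? "(server)" : $1
               n[p "\t" $2]++ }
       END { for (k in n) printf "%d\t%s\n", n[k], k }' "$@" |
    sort -t $'\t' -k1,1nr -k2,2 -k3,3
}

# Usage: summarize_queue /tmp/queue.tsv | head
```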

That is it for today. Bye.
