Aggregate checks let you combine data from items across one or more host groups, so you get an overall picture of the group instead of a single host.

Watch the video now.

Contents

I. Introduction (0:43)

II. How to (2:08)

1. Defining hosts (2:08)

2. Creating new host (optional) (3:28)

3. Creating aggregate item (4:37)

4. Defining aggregate item key (4:50)

5. Defining aggregate item attributes (7:26)

6. Aggregate checks results (11:45)

III. Important note (13:25)

Introduction

In this post, I’m going to explain how to run aggregate checks in Zabbix.

What do aggregate checks actually do? They calculate a single value, such as the minimum, maximum, sum, or average, from an item key that you specify, across the hosts in one or more host groups.

Aggregate checks do not collect any data from the hosts themselves, so no polling or trapping is performed. They run on the Zabbix server and work with data that items of other types have already collected from the hosts.
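To make the idea concrete, here is a minimal Python sketch of what a group function does with values that are already stored. It is only an illustration, not how the Zabbix server is implemented: the `latest_values` numbers are made up, and `aggregate` is a hypothetical helper. The names `grpmin`, `grpmax`, `grpsum`, and `grpavg` are the group functions as they appear in the Zabbix documentation for this item type.

```python
from statistics import mean

# Made-up latest values per host, standing in for data the server already stores.
latest_values = {
    "Zabbix server": 0.25,
    "Zabbix server 1": 0.5,
    "Zabbix server 2": 0.75,
}

# Group function names as listed in the Zabbix aggregate checks documentation.
GROUP_FUNCS = {"grpmin": min, "grpmax": max, "grpsum": sum, "grpavg": mean}

def aggregate(group_func, values_by_host):
    """Apply one group function to the stored values; no host is contacted."""
    return GROUP_FUNCS[group_func](list(values_by_host.values()))

results = {name: aggregate(name, latest_values) for name in GROUP_FUNCS}
print(results)
```

Note that the function only reads from the dictionary; nothing in it talks to a host, which mirrors the point that aggregate checks involve no polling or trapping.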

How to

Defining hosts

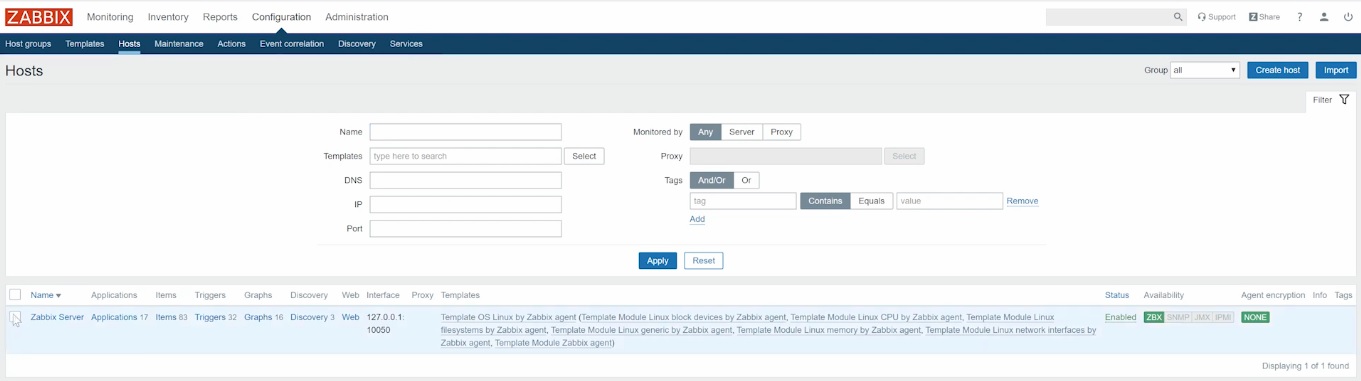

In my demo installation, I have only one Zabbix server in the Linux servers host group.

Zabbix server host

For the sake of demonstration, I will clone this Zabbix server host. To do that, click the host link, then press Full clone, type the new host name, and press Add. I’ll name these clones Zabbix server 1 and Zabbix server 2. These three hosts are collecting the data from one agent, installed on my virtual machine.

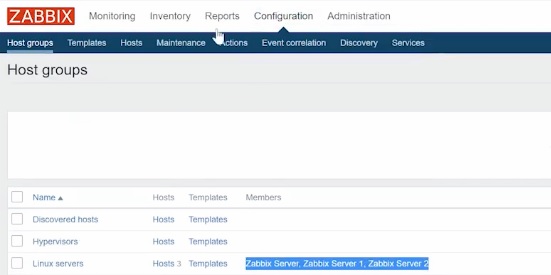

Each of these hosts is in the ‘Linux servers’ host group. To check this, go to Configuration > Host groups.

Servers assigned to the Linux servers group

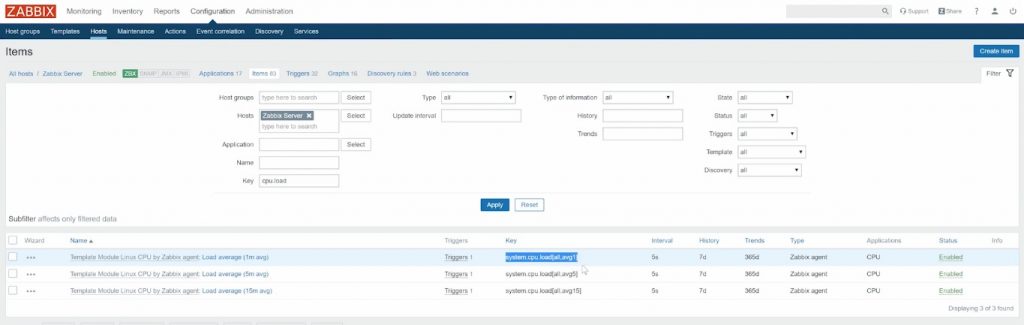

As an example, let’s create a CPU load item on each of these hosts. Now every host has the key ‘system.cpu.load[all,avg]’, which returns the CPU load averaged over one minute.

Host key

Creating new host (optional)

We have three hosts, which means that we have three items. Aggregate checks can calculate aggregate data across all of the hosts in the host group, so we need to create an aggregate item.

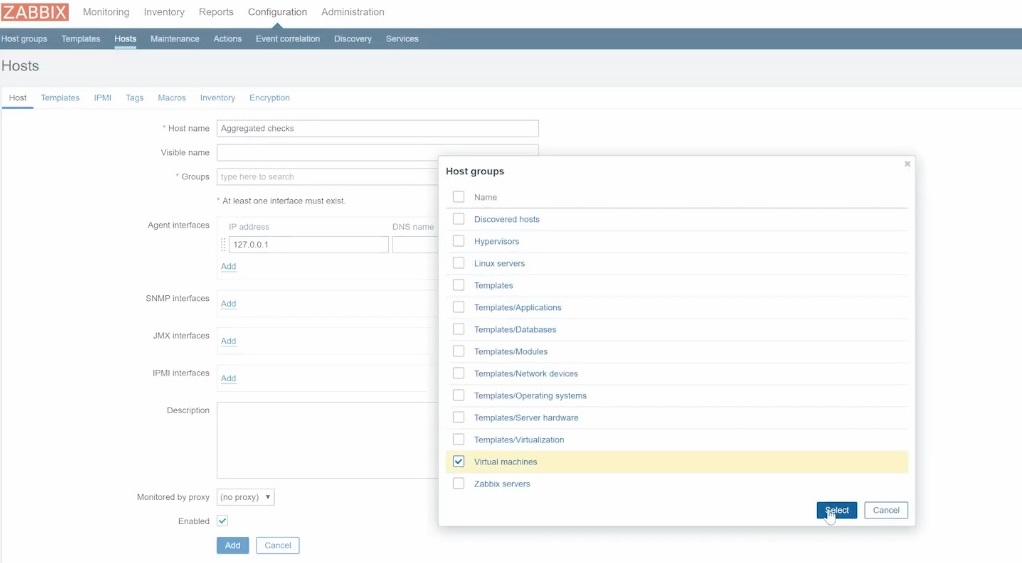

You can create the aggregate item on any existing host or create a new host for it. To create one, go to Configuration > Hosts and press Create host. You can use ‘Aggregate checks’ as the Host name, for example.

Remember that the host must be linked to at least one host group. It doesn’t have to be in the same group as our three test hosts.

Selecting host group

Agent interfaces do not matter as the aggregate checks are working with the data which was already collected. There won’t be any connections to the host, so IP address or DNS name doesn’t matter.

However, you must leave at least one interface active. Otherwise, you won’t be able to create a host.

Then click Add to create ‘Aggregate checks’.

Creating aggregate item

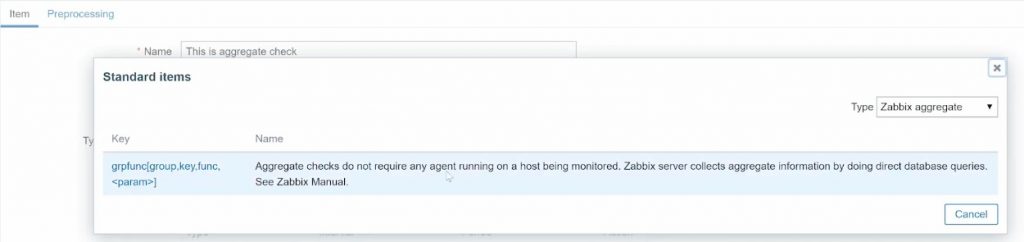

To create an aggregate item, click Items for Aggregate checks (or any other host) and press Create item. For the Type, select ‘Zabbix aggregate’.

Defining aggregate item key

We can define the item attributes by referring to the documentation on Zabbix aggregate checks.

So the syntax of the aggregate item key is:

groupfunc["host group","item key",itemfunc,timeperiod]
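As a rough illustration of this syntax, the following Python sketch assembles a key string from its four parts. The helper `build_aggregate_key` is hypothetical and exists only for this example; it handles a single host group and assumes that neither the group name nor the item key contains double quotes.

```python
def build_aggregate_key(group_func, host_group, item_key, item_func, time_period=None):
    """Assemble an aggregate item key: groupfunc["host group","item key",itemfunc,timeperiod]."""
    params = [
        '"{}"'.format(host_group),  # host group name, quoted
        '"{}"'.format(item_key),    # item key, quoted because it may contain brackets and commas
        item_func,
    ]
    if time_period is not None:
        # The time period is only added when the item function needs one.
        params.append(str(time_period))
    return "{}[{}]".format(group_func, ",".join(params))

key_last = build_aggregate_key("grpsum", "Linux servers", "system.cpu.load[all,avg]", "last")
key_avg = build_aggregate_key("grpavg", "Linux servers", "system.cpu.load[all,avg]", "avg", 300)
print(key_last)
print(key_avg)
```

Building the key from separate parts keeps the quoting in one place, which is where copy-paste mistakes tend to happen.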

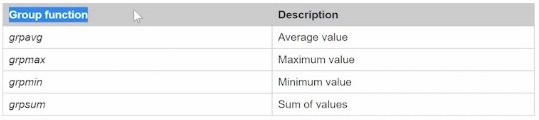

First, you can select any of the supported group functions.

Supported group functions

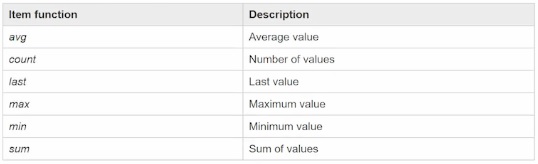

Then define the host group within which the data is calculated, the item key whose values are used, and the item function.

Supported item functions

You need to define a time period only when the item function requires one. For example, the ‘last’ item function takes the single most recent value, so the time period doesn’t matter.

If you choose ‘max’, ‘count’, or ‘avg’, you need to specify the time period.

Keep in mind that the time period can only be a duration in seconds, minutes, or hours. It is not possible to define a range of values that you want to take into account.
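The sketch below shows one way to think about the time period as a plain duration. It is an assumption-laden illustration, not Zabbix code: `period_to_seconds` is a hypothetical helper, and it accepts only a bare number of seconds or a number with an `s`, `m`, or `h` suffix, matching the seconds, minutes, and hours mentioned above. Anything that looks like a range is rejected.

```python
import re

# Seconds per unit; an empty suffix means the number is already in seconds.
UNIT_SECONDS = {"": 1, "s": 1, "m": 60, "h": 3600}

def period_to_seconds(period):
    """Convert a duration such as 300, '30s', '5m' or '1h' to seconds."""
    match = re.fullmatch(r"(\d+)([smh]?)", str(period).strip())
    if match is None:
        # Ranges such as '10:00-11:00' are not durations and are refused.
        raise ValueError("not a plain duration: {!r}".format(period))
    return int(match.group(1)) * UNIT_SECONDS[match.group(2)]

print(period_to_seconds("5m"))
print(period_to_seconds("1h"))
```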

NOTE. It is also possible to calculate the average value across multiple host groups. In our example, we use only the Linux servers group with the three cloned Zabbix server hosts, but you can include other host groups if you have them.

As specified in the documentation, every host in the selected host group that has the key specified in the aggregate check is taken into account.

Let’s say I have 10 hosts in the Linux servers host group and only five of them have the ‘system.cpu.load’ key. Then only those five are included in the group average, and the rest are simply ignored.

The item stays supported and keeps collecting data, but you get an average over five hosts instead of ten. This makes sense: calculating an average for a host group doesn’t require every host in it to have the same key.
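The ten-host scenario can be sketched in a few lines of Python. Everything here is illustrative: the host names, the values, and the `group_average` helper are made up, and `agent.ping` is used only as an example of some other key that the remaining hosts might have.

```python
from statistics import mean

KEY = "system.cpu.load[all,avg]"

def group_average(hosts, item_key):
    """Average the item over the hosts that have it; hosts without the key are skipped."""
    values = [items[item_key] for items in hosts.values() if item_key in items]
    if not values:
        raise ValueError("no host in the group has this item key")
    return mean(values)

# Five hosts with the CPU load key (values 1.0 to 5.0) and five hosts without it.
hosts = {"host{}".format(i): {KEY: float(i)} for i in range(1, 6)}
hosts.update({"host{}".format(i): {"agent.ping": 1.0} for i in range(6, 11)})

average = group_average(hosts, KEY)
print(len(hosts), average)
```

The group contains ten hosts, but the average is taken over the five values that exist, so the divisor is five rather than ten.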

Defining aggregate item attributes

- Set the item name, for example, ‘This is aggregate check’.

- Select the Type: ‘Zabbix aggregate’.

- Define the Key syntax by pressing Select and choosing the required syntax.

Item key selection

- Look up the group function in the documentation if needed. For our demonstration, we select sum as the group function.

- Then comes the host group name, which is case sensitive, so I recommend going to Configuration > Host groups and copy-pasting the required host group name.

NOTE. Don’t forget to put the host group name in quotes and follow it with a comma.

- The next parameter is the key from which we will calculate the sum. In our example, it’s ‘system.cpu.load[all,avg]’, the average for one minute. The key must also match exactly, so I recommend opening the host, finding the key, and copy-pasting it.

Note. After you copy-paste the key, don’t forget to replace the brackets with quotes so that Zabbix doesn’t misread the key.

- The item function can be copied from the Item function section of the documentation.

The item function applies to the values of each item, not to the host group. In my example, we have three hosts with three ‘system.cpu.load’ items, and we have to decide which values to take from each host: for example, only the last received value, or the average of ‘system.cpu.load’ over five minutes.

For our demonstration, we’ll take ‘last’, which means that the last received value of the key is used.

So we’ll have three values, because we have three hosts in the Linux servers group, and those three values will be summed up.

- The last parameter, <param>, is shown in angle brackets, which means it is optional. Since I am using the ‘last’ item function, which takes the last received value, <param> can be deleted.

- Type of information will be ‘Numeric float’ since we’re talking about CPU load.

- We’ll have no units.

- I’ll set the Update interval for our demo to ten seconds to make it quicker.

Now we can Add the new item.
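The two-step calculation behind this item can be sketched as follows: first the item function reduces each host's values to one number, then the group function combines those numbers. This is a simplified model with made-up samples, not the server's actual logic. The helpers `item_value` and `group_sum` are hypothetical, only `last` and `avg` are modeled, and the way the time window is cut is an assumption.

```python
from statistics import mean

# Made-up (timestamp in seconds, value) samples per host.
history = {
    "Zabbix server":   [(0, 0.25), (60, 0.5), (120, 0.75)],
    "Zabbix server 1": [(0, 0.5), (60, 0.5), (120, 0.5)],
    "Zabbix server 2": [(0, 1.0), (60, 1.0), (120, 0.25)],
}

def item_value(samples, item_func, now, period=None):
    """Step 1: reduce one host's samples to a single value."""
    if item_func == "last":
        return samples[-1][1]
    if item_func == "avg":
        # Simplification: keep samples whose timestamp is within `period` seconds of `now`.
        window = [value for ts, value in samples if now - period <= ts <= now]
        return mean(window)
    raise ValueError("unsupported item function in this sketch: " + item_func)

def group_sum(history, item_func, now, period=None):
    """Step 2: sum the per-host values across the group."""
    return sum(item_value(s, item_func, now, period) for s in history.values())

sum_of_last = group_sum(history, "last", now=120)
sum_of_avg = group_sum(history, "avg", now=120, period=60)
print(sum_of_last, sum_of_avg)
```

With these sample values, the sum of the last values is 1.5, while the sum of the 60-second averages is 1.75, which shows how the choice of item function changes the aggregate result.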

Note. I will rename my ‘Aggregate checks’ to ‘Load average’ so that it is easier to show you in Latest data.

Now we can open the CLI and run:

zabbix_server -R config_cache_reload

to speed things up. Go to Configuration > Hosts, select the created item, and click Mass update, then Check now to make sure that the check runs successfully.

Aggregate checks results

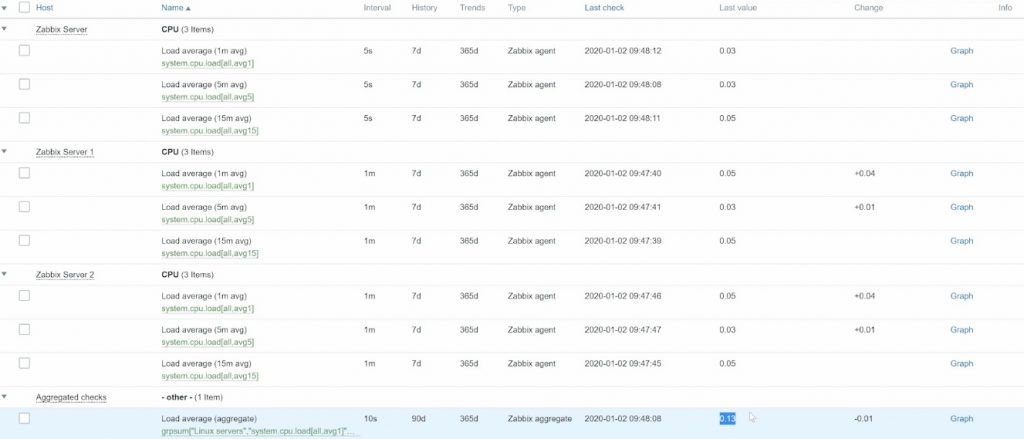

In the frontend, go to Monitoring > Latest data, select the host groups and hosts, and search for Load average.

Aggregate values

In this window, we have our Aggregate checks and our three duplicated hosts. Because we calculate the sum of the load values, the aggregate value equals the sum of the load values of all three hosts.

Aggregate value of different host values

If we add a new host to our Linux servers group, it is automatically taken into account by the aggregate check. If we add new hosts that do not have the ‘system.cpu.load’ key, they are not counted. If we eventually delete this item key, the aggregate item becomes unsupported and you have to fix it manually.

If you want to include another host group in the calculation, you have to edit the aggregate item manually.

Important note

Keep in mind that the aggregate value is calculated from data that is already stored in the database. You are not collecting data from the host; you are just reading it from the database.

So if you need some pre-processing, think twice before using it. If the pre-processing step was already applied in the original items that collected the data, then most likely you won’t have to apply it again on your aggregate item.

That’s all for today. You are welcome to like, comment, and subscribe.

